Four Labs Ship Competitive Open-Weights Coding Models in 12 Days — GLM-5.1, MiniMax M2.7, Kimi K2.6, and DeepSeek V4

TL;DR

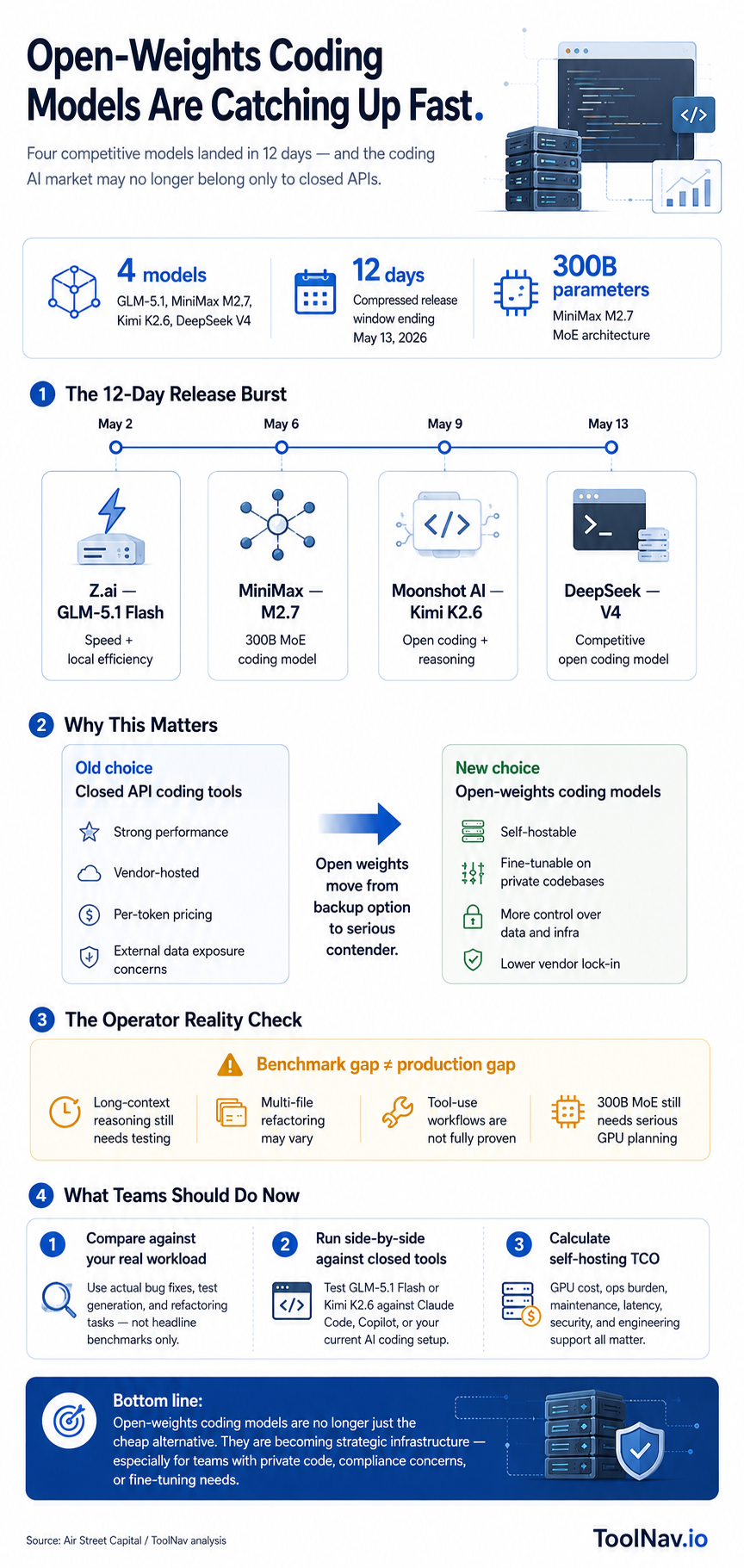

In a 12-day window ending May 13, four AI labs — Z.ai, MiniMax, Moonshot, and DeepSeek — shipped open-weights coding models that match or closely approach frontier performance on standard benchmarks. All four are downloadable and self-hostable. The compressed release timeline and open-weights distribution mark a significant shift in the competitive landscape for AI coding tools: capable coding models are now available outside closed API ecosystems.

- 4 open-weights coding models released in a 12-day window: GLM-5.1, M2.7, Kimi K2.6, DeepSeek V4

- 300B parameters in MiniMax M2.7 (MoE architecture; selective expert activation reduces active compute)

- 12 days: compressed release window, with each model benchmarking at near-frontier coding performance

Four AI labs released competitive open-weights coding models in a 12-day period ending May 13, 2026. The releases are Z.ai's GLM-5.1 Flash, MiniMax's M2.7, Moonshot AI's Kimi K2.6, and DeepSeek V4. Each is downloadable and self-hostable under permissive or research licences — no API key or subscription required to run them.

What the benchmark results show. All four models score competitively on SWE-bench Verified and related coding evaluation suites, reaching performance levels that were exclusive to frontier closed-source models six months ago. MiniMax M2.7 uses a mixture-of-experts architecture at 300 billion parameters, delivering high benchmark scores with selective expert activation that reduces per-token compute relative to its total parameter count. GLM-5.1 Flash emphasises speed and memory efficiency for local deployment. Kimi K2.6 and DeepSeek V4 target general coding and reasoning tasks with open weights at a scale that was previously impractical to distribute.
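
To make the "selective expert activation" point concrete, here is a rough back-of-envelope sketch in Python. Only the 300B total comes from the article. The expert share of the weights, the number of experts, and the number of experts routed per token are hypothetical placeholders, not published M2.7 specifications, and the function name is our own.

```python
def active_params_per_token(total_params: float,
                            expert_fraction: float,
                            num_experts: int,
                            top_k: int) -> float:
    """Estimate how many parameters a mixture-of-experts model touches per token.

    total_params    -- total parameter count (300e9 per the article)
    expert_fraction -- share of weights living in expert layers (ASSUMED)
    num_experts     -- experts per MoE layer (ASSUMED)
    top_k           -- experts the router activates per token (ASSUMED)
    """
    if not 0.0 <= expert_fraction <= 1.0:
        raise ValueError("expert_fraction must be between 0 and 1")
    if not 0 < top_k <= num_experts:
        raise ValueError("top_k must be between 1 and num_experts")
    # Attention, embeddings, and other shared weights run for every token.
    shared = total_params * (1.0 - expert_fraction)
    # Only top_k of num_experts expert blocks run for a given token.
    routed = total_params * expert_fraction * top_k / num_experts
    return shared + routed


if __name__ == "__main__":
    # Hypothetical configuration, for illustration only.
    active = active_params_per_token(300e9, expert_fraction=0.9,
                                     num_experts=64, top_k=4)
    print(f"Active per token: {active / 1e9:.1f}B of 300B total")
```

Under these placeholder values, a small fraction of the weights does the work for any single token. All 300B parameters still have to sit in memory, though, so the compute saving does not shrink the hardware footprint by the same ratio.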

The open-weights distribution is the defining characteristic. These are not API-only models. Developers can download the weights, run them locally or on self-managed cloud infrastructure, fine-tune them on proprietary codebases, and modify them for specific use cases. The practical consequence for teams evaluating coding AI: the choice is no longer only between closed models from OpenAI, Anthropic, and Google on one hand and significantly weaker open alternatives on the other.

The 12-day compression reflects a broader dynamic. Lab-scale model development cycles have shortened substantially. Each of these four releases would have been a multi-month milestone in 2024; in May 2026, they shipped within a single fortnight. The State of AI May 2026 report from Air Street Capital, which tracked all four releases, notes this pace as a structural feature of the current development environment rather than a coincidental cluster.

What is not yet established. Benchmark scores on SWE-bench measure specific types of coding tasks — fixing GitHub issues, writing functions to spec — but do not fully capture long-context reasoning, multi-file refactoring, or the tool-use capabilities that distinguish the top closed models in daily development workflows. Independent developer evaluations comparing these models to Claude Code and GPT-4.1 on real-world tasks are ongoing. The benchmark gap has narrowed; whether the production gap has narrowed to the same degree remains to be tested.

Why It Matters

Open-weights models at frontier-adjacent performance change the calculus for teams choosing coding AI infrastructure. Until recently, the strongest coding models were available exclusively through closed APIs — meaning you depended on vendor uptime, accepted their data policies, and paid per token at vendor-set rates. Four self-hostable models that approach Claude Code and GPT-4.1 on benchmarks give engineering teams a genuine alternative: run on your own infrastructure, fine-tune on your private codebase, and operate without external data exposure. The 12-day release window also reflects how fast the open-weights ecosystem is moving. Teams that evaluated open-weights coding models six months ago and ruled them out may be working with outdated conclusions. The performance gap that once justified closed-API lock-in is narrowing rapidly.

Who's Affected

- Engineering teams evaluating coding AI: all four models are available to test now without an API agreement or waitlist. Run SWE-bench evaluations against your actual codebase tasks rather than relying solely on published benchmarks.

- Teams with data sensitivity requirements: self-hosted open-weights models mean code never leaves your infrastructure. GLM-5.1 Flash and Kimi K2.6 are worth evaluating for teams where sending proprietary code to a third-party API is a compliance or security concern.

- AI coding tool vendors: the open-weights availability puts pricing and positioning pressure on closed-API coding tools. Differentiation will increasingly depend on product experience, integration depth, and enterprise support rather than raw model performance.

- Developers building on open infrastructure: fine-tuning a 300B MoE model on your organisation's codebase was not practical at this performance level until now. MiniMax M2.7's architecture makes domain-specific fine-tuning more accessible than comparable dense models.

What To Do Now

1. Pull the benchmark data before your next coding tool evaluation. All four models have published SWE-bench scores. Compare them against the closed models you currently pay for on the same task categories your team actually uses (long-context refactoring, test generation, debugging), not just headline numbers.

2. Run a side-by-side on a real task from your codebase. Benchmark scores are a starting point. Spin up GLM-5.1 Flash or Kimi K2.6 locally and give them the same bug fix or feature ticket you'd give Claude Code or Copilot. The delta between benchmark and production is where the real decision lives.

3. Evaluate the self-hosting cost trade-off explicitly. Running a 300B MoE model requires significant GPU infrastructure. Calculate the total cost of ownership (compute, ops, maintenance) against your current API spend before concluding self-hosted is cheaper. For most teams under 50 engineers, managed APIs still win on total cost.

4. Watch the fine-tuning window. These models are open-weights precisely so they can be fine-tuned. If you have a substantial internal codebase with strong conventions, a fine-tuned open-weights coding model can significantly outperform a general-purpose closed model on your specific patterns. That use case was not practical at this performance level six months ago.
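
The cost comparison in step 3 can be sketched as a small break-even calculation. This is a minimal illustration, not a pricing model: every figure below (GPU hourly rate, GPU count, ops overhead, API price per million tokens) is a made-up placeholder to swap for your own quotes, and the class and function names are ours.

```python
from dataclasses import dataclass


@dataclass
class SelfHostCosts:
    """Monthly self-hosting inputs. All values are user-supplied assumptions."""
    gpu_hourly_usd: float    # rental or amortised cost per GPU-hour
    gpu_count: int           # GPUs needed to serve the model
    hours_per_month: float   # hours the cluster stays up each month
    ops_monthly_usd: float   # staff time, monitoring, maintenance

    def monthly_total(self) -> float:
        compute = self.gpu_hourly_usd * self.gpu_count * self.hours_per_month
        return compute + self.ops_monthly_usd


def api_monthly_cost(tokens_per_month: float,
                     usd_per_million_tokens: float) -> float:
    """Spend on a managed API at a blended per-token price."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


def breakeven_tokens(costs: SelfHostCosts,
                     usd_per_million_tokens: float) -> float:
    """Monthly token volume at which self-hosting matches API spend."""
    return costs.monthly_total() / usd_per_million_tokens * 1_000_000


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    costs = SelfHostCosts(gpu_hourly_usd=2.0, gpu_count=8,
                          hours_per_month=730, ops_monthly_usd=5000)
    price = 10.0  # hypothetical blended USD per million tokens
    print(f"Self-host per month: ${costs.monthly_total():,.0f}")
    print(f"API at 1B tokens:    ${api_monthly_cost(1e9, price):,.0f}")
    print(f"Break-even volume:   {breakeven_tokens(costs, price) / 1e9:.2f}B tokens/month")
```

The sketch treats self-hosting as a fixed monthly cost and API usage as purely variable, which is why a break-even volume exists at all. It ignores utilisation limits, quality differences between models, and the engineering time spent on evaluation, so treat the output as a first filter before a fuller analysis.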
